Too early to take on the Russians! Artificial intelligence in American aircraft

In a surprising move for the times, the US Air Force claimed a breakthrough in air combat when it announced last week that its X-62A test aircraft, flown by an artificial intelligence (AI) "brain", had gone head-to-head with a manned fighter jet in a simulated air battle. The battle was simulated, yes, but it was flown in real airspace rather than inside a computer.

While this is indeed a great achievement, significant obstacles remain before an aircraft controlled by an artificial brain can be fully realized. This is not the Senate or the State Duma; it is much more complicated. The main difficulty is the AI's understanding of three-dimensional space and of its own position within it. This is the central problem today, and if it is solved, the US Air Force will be able to make AI-flown air combat a reality. Other autonomous tasks in the air will then become easier as well.

Autonomous AI systems for controlling aircraft during air combat are a fantasy that they want to make reality.

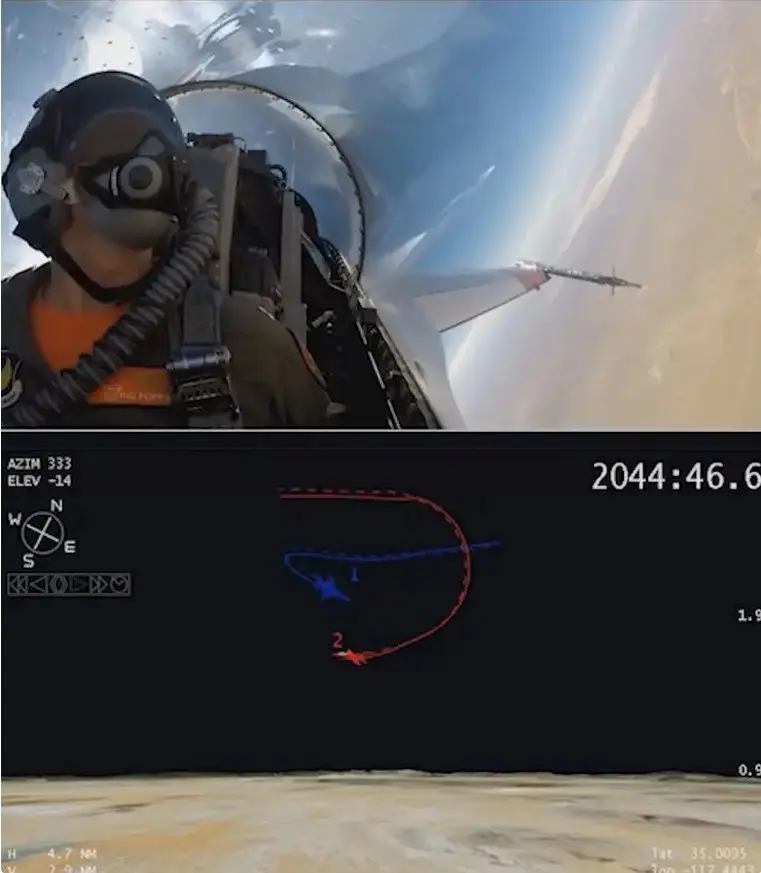

There have been many live tests, but to summarize briefly: last September the X-62A test aircraft, a highly modified two-seat F-16D Viper also known as the Variable In-flight Simulator Test Aircraft (VISTA), met a manned F-16 in the sky for the first time.

The X-62A flew the dogfights in fully autonomous mode using software based on artificial intelligence and machine learning, although a pilot remained in the cockpit at all times as a safety measure. After all, an airplane is not a cheap thing.

The flight tests were conducted under a program called Air Combat Evolution (ACE), which is led by the well-known Defense Advanced Research Projects Agency (DARPA) but also involves the US Air Force, several private contractors and scientific institutions.

The unique X-62A Variable In-flight Simulator Test Aircraft (VISTA) flew in fully autonomous mode against a manned F-16 fighter jet in a landmark dogfight training event in September 2023. US Air Force

Overall, the organizers of this show were pleased with the results. Defensive and offensive maneuvers were practiced, culminating in high-aspect nose-to-nose engagements in which the planes closed to short range at high speed and maneuvered against each other, simulating a dogfight.

Despite more than a century of advances in military aviation, the dogfight remains an event in which the pilot's direct judgment, intuition and three-dimensional vision are critical. The aircraft's sensor suite, including radar, electro-optical and infrared cameras, as well as electronic warfare and support systems, can provide a wealth of data on enemy contacts. However, the usefulness of these sensors steadily diminishes, if it does not disappear altogether, as the aircraft come into ever closer contact.

The radar in the nose of an airplane, for example, can only “see” what is in the cone-shaped area in front of it. Even existing 360-degree camera systems have 2D limitations and can be hampered by environmental conditions. Information received over data links from external sources can be extremely valuable for enhancing situational awareness or even for targeting weapons, but it too has limited accuracy. Externally supplied tracks of enemy and friendly fighters can merge together at very close range.
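The cone-shaped radar coverage described above can be illustrated with a small geometric sketch. Everything in it is an assumption made for illustration: the function name `in_radar_cone`, the 60-degree half-angle and the coordinates are hypothetical and do not describe any real radar.

```python
import math

def in_radar_cone(own_pos, own_heading, target_pos, half_angle_deg=60.0):
    """Return True if the target lies inside a forward-looking cone.

    own_heading is a direction vector for the aircraft's nose.
    half_angle_deg is an assumed value, not a real radar specification.
    """
    # Line-of-sight vector from own aircraft to the target
    los = [t - o for t, o in zip(target_pos, own_pos)]
    los_norm = math.sqrt(sum(c * c for c in los))
    head_norm = math.sqrt(sum(c * c for c in own_heading))
    if los_norm == 0.0 or head_norm == 0.0:
        return False  # degenerate geometry: no meaningful answer

    # Angle between the nose direction and the line of sight
    cos_angle = sum(a * b for a, b in zip(own_heading, los)) / (los_norm * head_norm)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    return math.degrees(math.acos(cos_angle)) <= half_angle_deg

# A target dead ahead is inside the cone; one directly behind is not.
ahead = in_radar_cone((0, 0, 0), (1, 0, 0), (5000, 0, 0))
behind = in_radar_cone((0, 0, 0), (1, 0, 0), (-5000, 0, 0))
```

In a turning fight the opponent spends much of the time outside any such cone, which is why a nose-mounted sensor alone cannot keep track of it.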

“Lose sight, lose the fight” is a common saying among American pilots that dates back to the Second World War and, oddly enough, is still relevant today. It is especially important for AI-controlled aircraft, which need high-quality telemetry to know where they are in relation to enemy aircraft. A real enemy will be very reluctant to cooperate in providing this type of information, and artificial intelligence cannot yet compete with the human brain in analyzing incoming information and making decisions on that basis in battle.

Not surprisingly, this very important phase of autonomous air combat comes with serious caveats. DARPA experts have repeatedly described how the so-called "autonomous agents" loaded into the X-62A's mission systems maintained overall situational awareness during dogfights. The picture that emerged was one in which AI-driven algorithms had full situational awareness during DARPA's AlphaDogfight trials, which ended in 2020 and fed directly into ACE. True, the AlphaDogfight trials took place in entirely simulated conditions.

But DARPA understood that a program running in simulated space is one thing and reality is another, and that the former will never replace the latter. So in the end the two F-16s, one flown by a human and the other being VISTA, met in flight. The main task was to create an “observation space”, that is, channels for transmitting and receiving data between the aircraft, so that information about the position of the conventional aircraft reached the VISTA platform and was then passed on, as needed, to the other agents in that observation space.

Agents, also called “autonomy agents,” are first and foremost subsystems for controlling the aircraft and analyzing the situation. Work on them has been under way for a long time, but American engineers have not yet made progress tangible enough to justify any firm statements. There are still more questions than answers, but the work continues.

According to those working on the program, a large number of variables affect the operation of aircraft systems, so the first step is to understand how an AI-equipped aircraft works as an integrated whole, in all its aspects. The actual operation of the systems differs in too many ways from the simulated conditions.

The gap between reality and the simulation environment creates many problems where safety is concerned.

With so many unknowns in this first air combat, the main focus was on ensuring that the X-62A could autonomously perform a variety of missions. One of the first tasks, moreover, was for the aircraft's systems to gather as much “digestible” data about the environment as possible.

DARPA and the Air Force have repeatedly emphasized that ACE's primary goal is to build trust in the autonomy of artificial intelligence. Developing the necessary technologies and capabilities for an autonomous aircraft capable of performing such maneuvers and tasks has much broader implications.

There is also a question of practicality. The X-62A simply does not yet have any organic sensor suite that would give it the continuous, so to speak, 360-degree situational awareness that would be required for truly autonomous air combat.

“All-round, 360 degrees” is not quite accurate: an aircraft in flight sits inside a sphere of three-dimensional space, so there are rather more degrees to cover. There will have to be more sensors, and they will have to see further.
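The difference between a circle and a sphere can be put in numbers. The sketch below uses the standard solid-angle formula for a cone, Ω = 2π(1 − cos θ), and compares it with the 4π steradians of a full sphere. The 60-degree half-angle is an assumed figure for illustration only, not a specification of any real sensor.

```python
import math

def cone_solid_angle(half_angle_deg):
    """Solid angle in steradians of a cone with the given half-angle."""
    theta = math.radians(half_angle_deg)
    return 2.0 * math.pi * (1.0 - math.cos(theta))

FULL_SPHERE_SR = 4.0 * math.pi  # steradians in a complete sphere

# The whole sphere expressed in square degrees (about 41,253)
full_sphere_sq_deg = FULL_SPHERE_SR * (180.0 / math.pi) ** 2

# Share of the sphere covered by one forward cone with an assumed
# 60-degree half-angle (about 0.25, that is, a quarter of the sphere)
fraction = cone_solid_angle(60.0) / FULL_SPHERE_SR
```

Under that assumption, a single forward-looking cone leaves roughly three quarters of the surrounding sphere unobserved.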

This is something that needs to be addressed when it comes to developing future autonomous platforms. Arrays of small conformal radars, electro-optical or infrared cameras and other sensors can be used to provide the necessary situational and spatial data, essentially working together to build a solid digital 3D "picture" of what is happening in the immediate vicinity of the aircraft during fast-moving air combat.

A distributed network of sensors, including sensors on separate drones operating in a cooperative swarm and on other remote platforms, can also be used to create a more complete situational picture.

That is, a great deal of smart, modern electronics must sooner or later learn to do what a person does with one turn of the head: look around, instantly draw a conclusion about what is happening in the space around the aircraft, and react accordingly.

Both commercial and military aviation have made significant advances in automated “sense and avoid” capabilities over the past couple of decades, including for unmanned platforms. Some of these technologies could be carried over to the problem of air combat, especially when combined with a much more dynamic "thinking" AI agent framework that benefits from deep machine learning. Even the sensors and software models used for self-driving cars could help a combat drone engaged in this kind of fight better understand what is going on around it. Promising? Yes.

What needs to be clearly understood here is that simply installing something like an array of simple cameras (optical and IR) around the aircraft may not provide the 3D situational awareness required to reliably implement autonomous air combat capabilities. 2D data does not provide complete information about an aircraft's position, although some of the missing information can be reconstructed in software, using machine learning, and mapped into a 3D coordinate system. Even so, native 3D data will be of greatest value for such combat applications.
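One classical, non-machine-learning way to see why a single 2D view is not enough is triangulation. A camera yields only a bearing to the target, not a range, but two cameras at known positions yield two rays whose closest point of approach estimates the 3D position. The sketch below is purely illustrative: the function `triangulate` and all positions are hypothetical, and nothing in the source suggests this is the method used in ACE.

```python
import math

def _dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def _unit(v):
    n = math.sqrt(_dot(v, v))
    return tuple(c / n for c in v)

def triangulate(cam1, bearing1, cam2, bearing2, eps=1e-12):
    """Estimate a 3D point from two bearing rays.

    Each camera contributes only a direction (no range). The result is the
    midpoint of the shortest segment joining the two rays, or None if the
    rays are parallel and no position can be recovered.
    """
    d1, d2 = _unit(bearing1), _unit(bearing2)
    w0 = tuple(p - q for p, q in zip(cam1, cam2))
    a, b, c = _dot(d1, d1), _dot(d1, d2), _dot(d2, d2)
    d, e = _dot(d1, w0), _dot(d2, w0)
    denom = a * c - b * b
    if abs(denom) < eps:
        return None  # parallel bearings carry no depth information
    s = (b * e - c * d) / denom  # distance along ray 1
    t = (a * e - b * d) / denom  # distance along ray 2
    p1 = tuple(p + s * k for p, k in zip(cam1, d1))
    p2 = tuple(p + t * k for p, k in zip(cam2, d2))
    return tuple((u + v) / 2.0 for u, v in zip(p1, p2))

# Two hypothetical cameras 20 m apart observing the same target.
target = (0.0, 100.0, 20.0)
cam_a, cam_b = (-10.0, 0.0, 0.0), (10.0, 0.0, 0.0)
bearing_a = tuple(t - c for t, c in zip(target, cam_a))
bearing_b = tuple(t - c for t, c in zip(target, cam_b))
estimate = triangulate(cam_a, bearing_a, cam_b, bearing_b)
```

With a short baseline between cameras and a distant target the two rays become nearly parallel and the estimate grows very sensitive to small bearing errors, which is one reason sensors that measure range directly remain attractive.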

“The position in space of the mission in which you operate the aircraft or are initially deploying is a critical issue that we must solve in the airspace,” said Lieutenant Colonel Hefron, who is responsible for development work at ACE. The head of ACE acknowledged that his program is not the only one aimed at overcoming these problems, and singled out a separate Air Force project, VENOM (Viper Experimentation and Next-gen Operations Model).

A total of six F-16s are being modified under Project VENOM to support further research and development into autonomous flight. These efforts will also allow for more experimentation with multiple autonomous platforms working together.

One of the first F-16s to be converted into an autonomous test bed as part of Project VENOM

ACE and Project VENOM are among a wide range of programs and activities that contribute to the Air Force's broader vision of future autonomous capabilities, particularly the Collaborative Combat Aircraft advanced unmanned aerial vehicle program. The rest of the US military is also increasingly interested in new and evolving autonomous capabilities that extend beyond the airspace. All this could have implications for the commercial aviation sector as well.

Overall, after last year's breakthrough dogfight, significant challenges clearly remain, especially when it comes to allowing an AI-piloted fighter to successfully engage a real enemy. It will be very interesting to see what milestones ACE and other autonomous R&D efforts reach next, and solving this problem will undoubtedly be high on their priority to-do lists.

The headline may already have prompted many readers to ask: what does this have to do with us? Well, who are all these future programs aimed at? At Iran, whose air force flies fifty-year-old planes? Or at the DPRK, where the MiG-17 and MiG-19 are still in service? At present the United States has two credible adversaries that are not so easy to deal with: China and Russia. And while China will rely on quantity, we, excuse me, can rely only on quality.

However, the development of air defense systems has already reached the point where a pilot and aircraft inside an air defense coverage area are often potential victims. And if we look at the statistics, the greater part of the aircraft lost on both sides of the front were shot down by air defenses.

Air combat is a rarity today, but a well-trained pilot has become an even more valuable resource. The desire to “seat” a powerful computer in the cockpit, one that can analyze the surrounding situation and make decisions, is therefore natural. It is even commendable, because in the future such machines can be thrown at the enemy just as cruise missiles and Shaheds are sent today, without much regard for losses.

This is a slightly vile nation: they want to fight and win, but without losing any of their own. Preferably none at all. However, this has been known for a long time, which means we should expect this topic to be developed further. As they say, consistency is a sign of mastery.
