Artificial Intelligence. Part One: The Path to Superintelligence

This article (and others like it) exists for a simple reason: artificial intelligence may be not just an important topic of discussion but the most important one for our future. Anyone who looks even a little into the potential of artificial intelligence admits that the topic cannot be ignored. Some people, including Elon Musk, Stephen Hawking and Bill Gates, hardly the most foolish people on the planet, believe that artificial intelligence poses an existential threat to humanity, one that could end in our extinction as a species. So sit back and dot the i's for yourself.

“We are on the edge of change comparable to the rise of human life on Earth” (Vernor Vinge).

What does it mean to stand on the threshold of such changes?

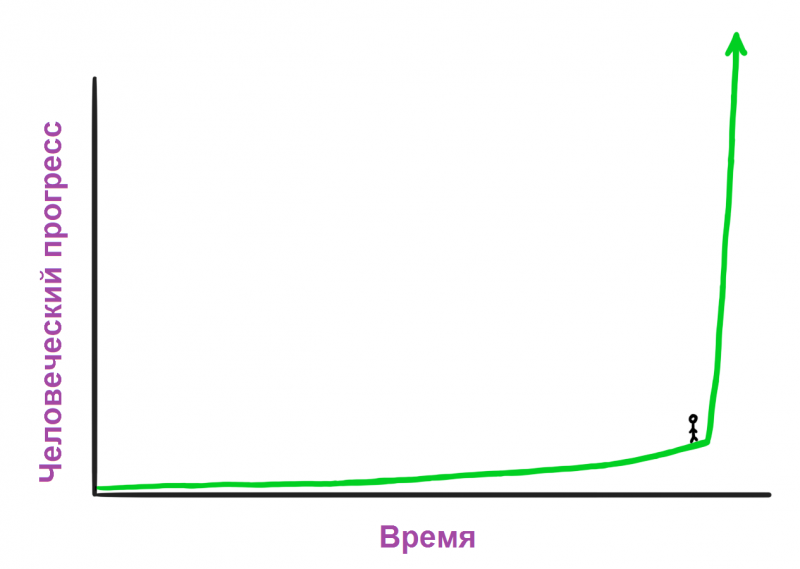

It seems like nothing special. But remember that standing at this spot on the chart means you cannot see what lies to your right. So this is how it actually feels:

It feels perfectly normal; the flight is going fine.

The future is coming

Imagine that a time machine took you to 1750, a time when the world was in a permanent power outage, long-distance communication meant firing a cannon, and all transport ran on hay. Suppose you arrive there, pick someone up and bring him to 2015 to show him how things are here. We cannot really grasp what it would be like for him to see shiny capsules racing along the roads; to talk with people on the other side of the ocean; to watch sports being played a thousand kilometers away; to hear a musical performance recorded 50 years earlier; to play with a magic rectangle that can take a picture or record a living moment; to pull up a map with a paranormal blue dot showing where he is; to look at someone's face and chat with them across many kilometers, and so on. To a person from almost three hundred years ago, all of this is inexplicable magic. And that is before we mention the Internet, the International Space Station, the Large Hadron Collider, nuclear weapons and the general theory of relativity.

For him the experience would not be merely surprising or shocking; those words do not capture the scale of the mental collapse. Our traveler might actually die.

But here is the interesting part. If he then went back to 1750, got jealous that we had enjoyed watching his reaction to 2015, and wanted the same fun, he could take the time machine back to, say, 1500, pick someone up, bring him to 1750 and show him around. The guy from 1500 would be hugely shocked, but he would be unlikely to die. Surprising as it would be, the difference between 1500 and 1750 is much smaller than the difference between 1750 and 2015. The man from 1500 would be surprised by some new physics, amazed at what Europe had become under the hard heel of imperialism, and would have to redraw his map of the world. But everyday life in 1750 (transportation, communication and so on) would be unlikely to surprise him to death.

No, for the guy from 1750 to have as much fun as we did, he would have to go much further back, perhaps to around 12,000 BC, before the first agricultural revolution gave rise to the first cities and to the concept of civilization. If someone from the hunter-gatherer world, from a time when humans were still just another animal species, saw the vast human empires of 1750, with their towering churches, their ocean-crossing ships, their concept of being “inside” a building, and all their accumulated knowledge, he would most likely die.

And then, having died of surprise, he would get jealous and want to do the same thing. He would go back another 12,000 years, to 24,000 BC, grab a man and bring him to his own time. And the new traveler would tell him, “Well, okay, thanks.” For the man from 12,000 BC to get the same effect, he would have to go back more than 100,000 years and pick up someone to whom he could show fire and language for the first time.

For someone to be transported into the future and be surprised to death, progress has to cover a certain distance: it has to reach a Mortal Progress Point (MPP). In hunter-gatherer times one MPP took more than 100,000 years; after the agricultural revolution, around 12,000 BC, it took only about 12,000 years, which brought the world to roughly 1750. After that, a couple of hundred years were enough, and here we are.

This pattern, in which human progress moves faster as time goes on, is what futurist Ray Kurzweil calls human history's law of accelerating returns. It happens because more advanced societies are able to progress faster than less advanced ones. People in the 19th century knew more than people in the 15th century, so it is no surprise that progress went faster in the 19th century than in the 15th, and so on.

The same thing works on a smaller scale. The film “Back to the Future” came out in 1985, and its “past” was set in 1955. When Michael J. Fox's character, Marty McFly, went back to 1955, he was caught off guard by the novelty of television sets, the price of soda, the lack of love for electric guitar, and the differences in slang. It was a different world, of course, but if the film were made today and the past were 1985, the difference would be far greater. A Marty McFly who traveled back from our time to a world without personal computers, the Internet or mobile phones would be far more out of place than the Marty who went from 1985 to 1955.

All of this follows from the law of accelerating returns. The average rate of progress between 1985 and 2015 was higher than between 1955 and 1985, because in the later period the world was more advanced: it was saturated with the achievements of the previous 30 years.

So the more has been achieved, the faster change comes. Shouldn't that tell us something about the future?

Kurzweil suggests that all the progress of the 20th century could have been achieved in just 20 years at the rate of progress of the year 2000; in other words, by 2000 progress was moving five times faster than the 20th-century average. He also believes that another 20th century's worth of progress took place between 2000 and 2014, and that the next one will take place by 2021, in only seven years. A few decades from now, a 20th century's worth of progress will happen several times a year, and later still in less than a month. All in all, the law of accelerating returns leads Kurzweil to conclude that the 21st century will see 1,000 times the progress of the 20th.
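The arithmetic behind those figures is easy to check. Here is a minimal sketch: if one 20th century's worth of progress is squeezed into a shorter span, the average rate over that span is simply 100 years divided by the span. The function name and the labels are made up for this illustration; only the spans (20, 14 and 7 years) come from the paragraph above.

```python
# Rough arithmetic behind the figures quoted above. The helper name and the
# labels are invented for this sketch; the spans come from the text.
CENTURY_YEARS = 100

def relative_rate(span_years):
    """Average rate of progress over a span that packs in one 20th century's
    worth of progress, relative to the 20th-century average."""
    return CENTURY_YEARS / span_years

for label, span in [("at the year-2000 rate", 20),
                    ("2000 to 2014", 14),
                    ("2014 to 2021", 7)]:
    print(f"{label}: about {relative_rate(span):.1f}x the 20th-century average")
```

The first line reproduces the "five times faster" claim; the other two show how quickly the multiplier grows as the spans shrink.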

If Kurzweil and those who agree with him are right, 2030 may stun us the way 2015 would stun the guy from 1750; that is, the next Mortal Progress Point may take only a couple of decades, and the world of 2050 may be so different from today's that we would barely recognize it. This is not science fiction. It is what many scientists who are smarter and better educated than you and I believe. And if you look at history, you will see that the prediction follows from plain logic.

So why, when we hear a statement like “the world will change beyond recognition in 35 years,” do we shrug skeptically? There are three reasons for our skepticism about predictions of the future:

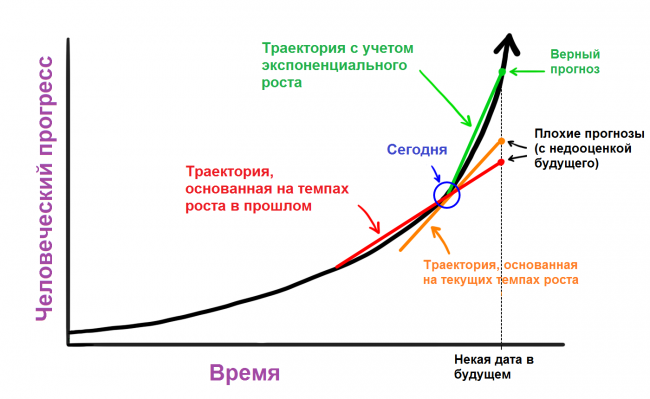

1. When it comes to history, we think in straight lines. When we try to imagine the progress of the next 30 years, we look at the progress of the previous 30 as an indicator of how much is likely to happen. When we think about how the world will change in the 21st century, we take the progress of the 20th century and add it to the year 2000. Our guy from 1750 made the same mistake when he picked up someone from 1500 and expected to shock him. Thinking linearly comes naturally to us, when we should be thinking exponentially. A futurist trying to predict the progress of the next 30 years should, at the very least, judge by the current rate of progress rather than by the previous 30 years. That forecast would be more accurate, but it would still miss the mark. To think about the future correctly, you have to imagine things moving at a much faster pace than they are moving now.

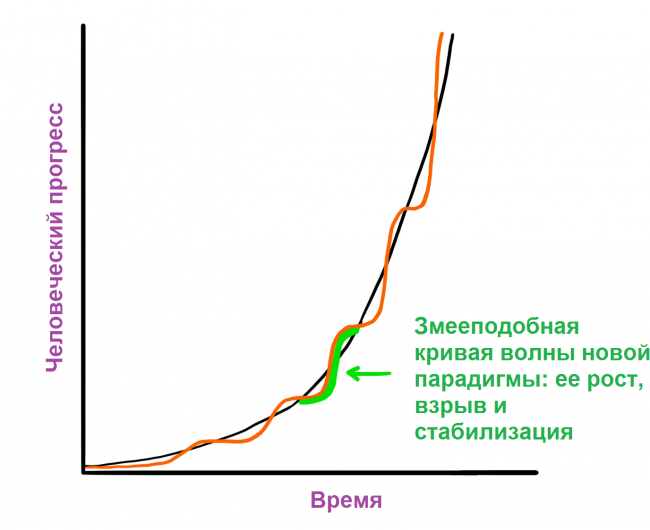

2. The trajectory of very recent history often tells a distorted story. First, even a steep exponential curve looks linear when you only see a small slice of it. Second, exponential growth is not perfectly smooth and uniform. Kurzweil explains that progress happens in “S-curves”.

An S-curve goes through three phases: 1) slow growth (the early phase of exponential growth); 2) rapid growth (the late, explosive phase of exponential growth); 3) leveling off as the particular paradigm matures.
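To make the three phases concrete, here is a small sketch that uses the logistic function as a stand-in for an S-curve. That choice is an assumption of this example (the text does not say which curve Kurzweil has in mind), and the sample points are arbitrary.

```python
import math

def s_curve(t, midpoint=0.0, steepness=1.0):
    """Logistic function: one common way to draw an S-curve (values in 0..1)."""
    return 1.0 / (1.0 + math.exp(-steepness * (t - midpoint)))

def growth(t, dt=1.0):
    """Progress gained over one time step starting at t."""
    return s_curve(t + dt) - s_curve(t)

# Arbitrary sample points in the early, middle and late parts of the curve.
early, middle, late = growth(-6.0), growth(-0.5), growth(5.0)
print(f"early {early:.4f}, middle {middle:.4f}, late {late:.4f}")
```

Growth per step is tiny at the start, large in the middle and tiny again at the end, so anyone who samples only one stretch of the curve gets a misleading idea of the overall pace.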

If you look only at very recent history, the part of the S-curve you happen to be on can hide the true speed of progress from you. The stretch between 1995 and 2007 saw the explosive growth of the Internet, the arrival of Microsoft, Google and Facebook in the public consciousness, the birth of social networks, and the rise of cell phones and then smartphones. That was the second phase of the curve. The period from 2008 to 2015 was less groundbreaking, at least on the technological front. Someone thinking about the future today might look at the last few years to gauge the overall pace of progress, but that misses the bigger picture. In fact, a new and powerful phase 2 may be brewing right now.

3. Our own experience makes us stubborn old men about the future. We base our ideas about the world on personal experience, and that experience has ingrained the growth rate of the recent past in our heads as “the way things happen.” Our imagination is limited too, because it uses our experience to make predictions, and often we simply do not have the tools to predict the future accurately. When we hear a prediction that contradicts our everyday sense of how things work, we instinctively find it naive. If I told you that you might live to 150 or 250, or might not die at all, your instinct would be: “That's stupid. If I know one thing from history, it's that everybody dies.” And it is true that no one has ever lived that long. But no one flew airplanes before airplanes were invented, either.

So while skepticism may feel reasonable, it is most often wrong. If we are guided by pure logic and expect the usual historical patterns to continue, we have to conclude that a great, great deal will change in the coming decades, far more than we intuitively expect. Logic also suggests that if the most advanced species on the planet keeps making bigger and bigger leaps forward, faster and faster, at some point a leap will be so large that it completely alters life as we know it. Something similar happened in the course of evolution, when humans became so intelligent that they completely changed life for every other species on Earth. And if you spend a little time reading about what is going on in science and technology today, you may start to see hints of what the next giant leap will be.

The path to superintelligence: what is AI (artificial intelligence)?

Like many people on this planet, you are probably used to thinking of artificial intelligence as a silly science-fiction concept. But lately a lot of serious people have been voicing concern about this silly concept. What is going on?

There are three reasons that lead to confusion around the term AI:

1. We associate AI with movies. “Star Wars”. “Terminator”. “2001: A Space Odyssey”. But those films are fiction, and so are their robots. Hollywood thus blurs our perception: AI comes to seem familiar, fictional and, of course, evil.

2. AI is a broad field. It ranges from the calculator in your phone to self-driving cars to something far in the future that will change the world drastically. AI refers to all of these things, which is confusing.

3. We use AI every day, but often we do not even realize it. As John McCarthy, who coined the term “artificial intelligence” in 1956, put it, “as soon as it works, no one calls it AI anymore.” As a result, AI sounds more like a mythical prediction about the future than something real. At the same time, the name also carries a whiff of something from the past that never came true. Ray Kurzweil says he hears people say that AI withered in the 1980s, a claim he compares to “insisting that the Internet died in the dot-com bust of the early 2000s.”

Let's clear things up. First, stop thinking about robots. A robot is a container for AI; sometimes it mimics the human form and sometimes it does not, but the AI itself is the computer inside the robot. The AI is the brain and the robot is its body, if it has a body at all. For example, the software and data behind Siri are the artificial intelligence, the woman's voice is a personification of that AI, and there is no robot involved at all.

Second, you have probably heard the term “singularity” or “technological singularity.” In mathematics the term describes an unusual situation in which the ordinary rules no longer apply. In physics it describes the infinitely small, dense point at the center of a black hole, or the initial point of the Big Bang; again, situations in which the usual laws of physics do not apply. In 1993 Vernor Vinge wrote a famous essay in which he applied the term to the moment in the future when the intelligence of our technology exceeds our own, a moment after which life as we know it will change forever and the usual rules will no longer apply. Ray Kurzweil later refined the term, defining the singularity as the time when the law of accelerating returns reaches such an extreme pace that technological progress happens at a seemingly infinite rate, after which we will be living in an entirely new world. Many experts have since stopped using the term, however, so we will not refer to it much either.

Finally, although there are many types or forms of AI, since AI is a broad concept, the main categories are defined by an AI's caliber. There are three main categories:

1. Artificial narrow intelligence (ANI), also called weak AI. ANI specializes in one area. There is AI that can beat the world chess champion, but that is the only thing it does. There is AI that can work out the best way to store data on a hard disk, and that is all it does.

2. Artificial general intelligence (AGI), also called strong AI or human-level AI. AGI refers to a computer that is as smart as a human across the board: a machine that can perform any intellectual task a human being can. Creating AGI is much harder than creating ANI, and we have not done it yet. Professor Linda Gottfredson describes intelligence as “a very general mental capability that, among other things, involves the ability to reason, plan, solve problems, think abstractly, comprehend complex ideas, learn quickly, and learn from experience.” AGI would be able to do all of these things as easily as you can.

3. Artificial superintelligence (ASI). Oxford philosopher and leading AI thinker Nick Bostrom defines superintelligence as “an intellect that is much smarter than the best human brains in practically every field, including scientific creativity, general wisdom and social skills.” Artificial superintelligence ranges from a computer that is just a little smarter than a human to one that is trillions of times smarter across the board. ASI is the reason interest in AI is growing, and the reason the words “extinction” and “immortality” come up so often in these discussions.

Humans have already conquered the lowest caliber of AI, narrow AI, in many ways. The AI revolution is the road from narrow AI, through general AI, to superintelligence. We may or may not survive that road, but either way it will change everything.

Let's take a close look at how the leading thinkers in the field see this road, and why the revolution may happen much sooner than you think.

Where are we on this road?

Narrow AI is machine intelligence that equals or exceeds human intelligence or efficiency at one specific task. A few examples:

* Cars are full of narrow AI systems, from the computer that decides when the anti-lock brakes should kick in to the computer that tunes the parameters of the fuel injection system. Google's self-driving car, currently being tested, will contain robust narrow AI systems that let it perceive and react to the world around it.

* Your phone is a little narrow AI factory. When you navigate with a maps app, get recommendations for apps or music, check tomorrow's weather, talk to Siri, or do dozens of other everyday things, you are using narrow AI.

* Your email spam filter is a classic kind of narrow AI. It starts out knowing how to tell spam from legitimate mail, and then learns and tailors itself to your messages and preferences.

* You know that unsettling feeling when you search for a screwdriver or a new plasma TV one day and see offers for it from helpful stores on other sites the next? Or when a social network suggests interesting people to add as friends? These are all narrow AI systems working together, informing one another about who you are and what you like, and homing in on you. They analyze the behavior of millions of people and draw conclusions from it in order to sell the services of large companies or to improve them.

* Google Translate is another classic narrow AI system, impressively good at certain things. So is voice recognition. When your plane lands, it is not a human who decides which gate it goes to, just as it is not a human who set the price of your ticket. The world's best players of checkers, chess, backgammon, Scrabble and other games are now all narrow AI systems.

* Google search is one giant narrow AI that uses incredibly clever methods to rank pages and decide what to show you.

And that is just the consumer world. Sophisticated narrow AI systems are widely used in the military, in manufacturing and in finance, in medical systems (think of IBM's Watson), and so on.

Narrow AI systems in their current form do not pose a threat. At worst, a buggy or badly programmed narrow AI can cause an isolated disaster: knock out a power grid, crash financial markets, and the like. But although narrow AI does not have the capacity to create an existential threat, we should look at the bigger picture: a world-shaking hurricane is on its way, and narrow AI is its forerunner. Each new narrow AI innovation adds another brick to the road that leads to general AI and superintelligence. Or, as Aaron Saenz aptly put it, the narrow AI systems of our world are like “the amino acids in the early Earth's primordial ooze”: the still-inanimate building blocks of life that, one day, woke up.

The path from narrow AI to general AI: why is it so difficult?

Nothing reveals the complexity of human intelligence like trying to build a computer that is just as smart. Building skyscrapers, flying into space, unraveling the secrets of the Big Bang: all of that is trivial compared with reproducing our own brain, or even just understanding it. As of today, the human brain is the most complex object in the known universe.

You may not even suspect how hard it is to create general AI (a computer that is as smart as a human in general, not just in one area). Building a computer that can multiply two ten-digit numbers in a split second is easy. Building one that can look at a dog and a cat and say which is which is incredibly hard. Build an AI that can beat a chess grandmaster? Done. Now try to get it to read a paragraph from a book for six-year-olds and understand not just the words but their meaning. Google is spending billions of dollars trying to do that. Hard things, such as calculation, financial market strategy and language translation, are easy for a computer, while easy things, such as vision, movement and perception, are extremely hard for it. As Donald Knuth put it, “AI has by now succeeded in doing essentially everything that requires ‘thinking’ but has failed to do most of what people and animals do ‘without thinking.’”

Think about why, and you will realize that the things that seem easiest to us only seem so because they have been optimized in us (and in animals) over hundreds of millions of years of evolution. When you reach for an object, the muscles, joints and bones of your shoulder, elbow and hand instantly carry out a long chain of physical operations, synchronized with what you see, to move your hand through three dimensions. It feels effortless because your brain has perfected software for it. The same idea explains why typing in a crookedly written word (a captcha) when registering a new account is simple for you and hell for a malicious bot. For our brain there is nothing hard about it: you just have to be able to see.

Multiplying large numbers or playing chess, on the other hand, are new activities for biological creatures, and we have not had millions of years to become good at them, so a computer does not need to work very hard to beat us. Just think: which would you rather build, a program that multiplies large numbers, or a program that recognizes the letter B in any of its millions of forms, in the most unpredictable fonts, handwritten, or scratched in the snow with a stick?

One simple example: when you look at this picture, both you and a computer can tell that it shows alternating squares of two different shades.

But if you take away the black, you will immediately describe the whole picture (cylinders, planes, three-dimensional corners), whereas the computer will not be able to.

It will describe what it sees as a set of two-dimensional shapes in several shades, which is technically true. Your brain is doing a ton of work to interpret the depth, the play of shadows and the lighting in the picture. In the picture below, a computer will see a two-dimensional white, gray and black collage, whereas you see what it really is: a three-dimensional rock.

And everything we have just covered is only the tip of the iceberg of understanding and processing information. To reach the human level, a computer would have to understand the difference between subtle facial expressions, the difference between pleasure, sadness, satisfaction and joy, and why Chatsky is good and Molchalin is not.

What to do?

The first step to creating general AI: increasing computational power

One thing that has to happen for general AI to become possible is an increase in the power of computer hardware. If an artificial intelligence system is to be as smart as the brain, it has to match the brain's raw computing capacity.

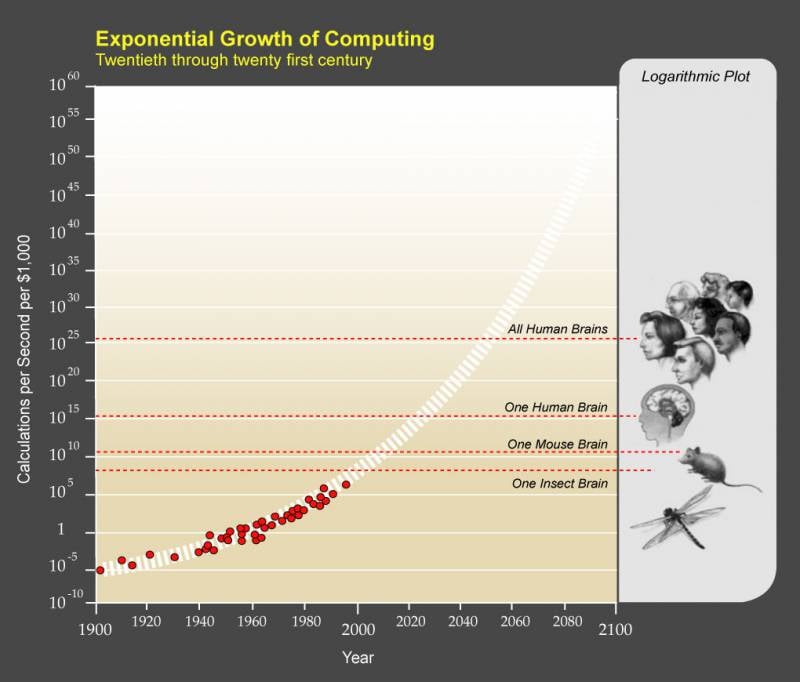

One way to express this capacity is the total number of operations per second (OPS) the brain can perform, and you could arrive at that number by working out the maximum OPS of each brain structure and adding them together.

Ray Kurzweil found a shortcut: take a professional estimate of the OPS of one structure and that structure's weight relative to the weight of the whole brain, then scale up proportionally to get an estimate for the whole brain. It sounds a bit dubious, but he did this many times with different estimates for different regions and always arrived at about the same number: on the order of 10^16, or 10 quadrillion OPS.
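The proportional scaling itself is a one-liner. The sketch below only illustrates the shape of the calculation: the function name is invented, and the input numbers are made up so the arithmetic is easy to follow. They are not Kurzweil's actual estimates.

```python
def whole_brain_ops(structure_ops, structure_weight_g, brain_weight_g):
    """Scale one structure's estimated OPS up by its share of brain weight."""
    return structure_ops * brain_weight_g / structure_weight_g

# Made-up inputs (NOT real measurements): a structure that performs 10^15 OPS
# and accounts for one tenth of the brain's weight.
estimate = whole_brain_ops(structure_ops=1e15,
                           structure_weight_g=140,
                           brain_weight_g=1400)
print(f"{estimate:.0e} OPS")
```

With these illustrative inputs the estimate lands at the order of magnitude quoted above; the method stands or falls on how representative the chosen structure is.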

The world's fastest supercomputer, China's Tianhe-2, has already passed that number: it can perform about 34 quadrillion operations per second. But Tianhe-2 takes up 720 square meters of floor space, draws 24 megawatts of power (our brain runs on just 20 watts) and cost 390 million dollars. It is hardly suitable for commercial or widespread use.

Kurzweil suggests judging the state of computers by how many OPS you can buy for 1,000 dollars. When that number reaches the human level, 10 quadrillion OPS, general AI may well become a part of our lives.

Moore's Law, a historically reliable rule stating that the world's maximum computing power doubles roughly every two years, means that computer hardware, like human progress in general, advances exponentially. Measured by Kurzweil's 1,000-dollar yardstick, we can currently buy about 10 trillion OPS for 1,000 dollars.

A 1,000-dollar computer now beats a mouse brain in computing power and is about a thousand times weaker than a human brain. That may not sound like much until you remember that computers were a trillion times weaker than the human brain in 1985, a billion times weaker in 1995 and a million times weaker in 2005. On that trajectory, by 2025 we should have an affordable computer that rivals the power of the brain.
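The figures above all describe the same trend: the gap between a 1,000-dollar computer and the brain shrinks by a factor of a thousand per decade. Here is a minimal sketch that extrapolates it, assuming the trend simply continues unchanged (an assumption of this example, not a guarantee). The function names are invented for the illustration.

```python
import math

def gap(year, ref_year=2005, ref_gap=1e6, factor_per_decade=1000.0):
    """How many times weaker than the brain a 1,000-dollar computer is,
    assuming the gap keeps shrinking by factor_per_decade every ten years."""
    decades = (year - ref_year) / 10
    return ref_gap / factor_per_decade ** decades

def doubling_time_years(factor_per_decade=1000.0):
    """Doubling time of OPS per 1,000 dollars implied by that shrink rate."""
    return 10 / math.log2(factor_per_decade)

for year in (1985, 1995, 2005, 2015, 2025):
    print(year, f"{gap(year):.0e}")
print(f"implied doubling time: {doubling_time_years():.2f} years")
```

Note that a thousandfold gain per decade works out to roughly one doubling per year in OPS per 1,000 dollars. That is an inference from the quoted numbers rather than a claim made in the text.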

So the raw power needed for general AI is technically already available. Within about 10 years it will move beyond China and become affordable and widespread. But raw computing power alone is not enough. The next question is: how do we bring human-level intelligence to all that power?

The second step to creating general AI: making it intelligent

This part is considerably harder. The truth is that no one really knows how to make a machine intelligent; we are still trying to work out how to build a human-level mind that can tell a cat from a dog, pick out a B drawn in the snow, and judge a second-rate film. But there are a handful of far-reaching strategies, and at some point one of them should work.

1. Copy the brain

This option is like scientists sitting in a classroom next to a very bright kid who keeps acing the tests: however diligently they study, they cannot come close to the kid. In the end they decide: to hell with it, let's just copy the kid's answers. It makes sense: we are struggling to build a super-complex computer, so why not take as our basis one of the best prototypes in the universe, our own brain?

The scientific world is working hard to figure out how our brain works and how evolution produced such a complex thing. By the most optimistic estimates, it will manage this by 2030. Once we understand the secrets of the brain's efficiency and power, we can draw inspiration from its methods when building technology. For example, one computer architecture that mimics the brain is the artificial neural network. It starts out as a network of transistor “neurons” connected to each other by inputs and outputs, and it knows nothing, like a newborn. The system “learns” by trying to perform a task, such as recognizing handwritten text. The connections between transistors are strengthened when an answer is right and weakened when it is wrong. After many rounds of trial and feedback, the system forms neural pathways that are optimized for the task. The brain learns in a similar, if far more sophisticated, way, and as we continue to study it we keep discovering ingenious new ways to improve neural networks.
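To give a feel for "adjusting connections after each answer", here is a minimal sketch of a single artificial neuron trained with the classic perceptron rule. This is a deliberately tiny stand-in, not how modern neural networks are trained: it only adjusts its connections after a wrong answer, and the task (learning the logical OR of two inputs) is made up for the example.

```python
# Toy training data: the logical OR of two inputs (an assumption of this sketch).
SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

def predict(weights, bias, inputs):
    """The neuron fires (1) if the weighted sum of its inputs is positive."""
    total = bias + sum(w * x for w, x in zip(weights, inputs))
    return 1 if total > 0 else 0

def train(samples, epochs=10, learning_rate=1.0):
    """Perceptron rule: leave the weights alone after a right answer and
    nudge them toward the target after a wrong one."""
    weights, bias = [0.0, 0.0], 0.0  # the "newborn" knows nothing
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0 or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

weights, bias = train(SAMPLES)
print([predict(weights, bias, inputs) for inputs, _ in SAMPLES])
```

After a few passes over the four examples the neuron answers all of them correctly. Real networks stack many such units and use more refined update rules, but the loop of guess, compare and adjust is the same idea.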

An even more extreme form of plagiarism is a strategy called whole brain emulation. The goal is to slice a real brain into thin layers, scan each one, use software to reconstruct an accurate three-dimensional model, and then implement that model on a powerful computer. We would then have a computer officially capable of everything the brain can do; it would only need to learn and gather information. If the engineers get really good, they will be able to emulate a real brain so accurately that the brain's full personality and memory remain intact once it has been uploaded to the computer. If the brain belonged to Vadim right before he died, the computer would wake up as Vadim, who would now be a human-level general AI, and we could then get to work turning Vadim into an unimaginably smart superintelligence, which he would surely be pleased about.

How far are we from whole brain emulation? So far we have only just managed to emulate the brain of a millimeter-long flatworm, which contains 302 neurons in total. The human brain contains about 100 billion. If getting to that number seems hopeless, remember the power of exponential progress. The next step will be emulating the brain of an ant, then a mouse, and after that a human will be just a stone's throw away.

2. Follow in the footsteps of evolution

If we decide that the smart kid's answers are too hard to copy, we can instead try to copy the way the kid studies and prepares for exams. What do we know? Building a computer as powerful as the brain is clearly possible; the evolution of our own brain is proof. And if the brain is too complex to emulate, we can try to emulate evolution instead. The point is that even if we could emulate a brain, doing so might be like trying to build an airplane by copying the flapping of a bird's wings. More often than not, we build good machines with a fresh, machine-oriented approach rather than by exactly imitating biology.

So how do we simulate evolution to build general AI? The method, known as “genetic algorithms,” works roughly like this: there is a cycle of performance and evaluation that repeats over and over (just as biological creatures “perform” by living and are “evaluated” by whether they manage to reproduce). A group of computers attempts a set of tasks, and the most successful ones are “bred”: their characteristics are merged into new computers. The less successful ones are mercilessly thrown into the dustbin of history. Over many, many iterations, this process of natural selection produces better and better computers. The challenge is to build an automated evaluation and breeding cycle so that the evolutionary process runs on its own.
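The evaluate-and-breed cycle is easier to picture with a toy. In the sketch below the "computers" are just lists of bits and the "task" is to contain as many 1s as possible; both are assumptions made for the illustration, as are the population size, mutation rate and other parameters. It is a minimal genetic algorithm, not a recipe for evolving intelligence.

```python
import random

def fitness(genome):
    """Evaluation step: count the 1 bits (a stand-in for 'task performance')."""
    return sum(genome)

def evolve(pop_size=20, genome_len=16, generations=30,
           mutation_rate=0.05, seed=0):
    """Run the cycle and return the best fitness seen in each generation."""
    rng = random.Random(seed)
    population = [[rng.randint(0, 1) for _ in range(genome_len)]
                  for _ in range(pop_size)]
    history = [max(fitness(g) for g in population)]
    for _ in range(generations):
        # Selection: the more successful half survives, the rest is discarded.
        population.sort(key=fitness, reverse=True)
        survivors = population[: pop_size // 2]
        children = []
        while len(survivors) + len(children) < pop_size:
            # Breeding: splice two survivors together, then mutate a little.
            mother, father = rng.sample(survivors, 2)
            cut = rng.randrange(1, genome_len)
            child = mother[:cut] + father[cut:]
            child = [1 - bit if rng.random() < mutation_rate else bit
                     for bit in child]
            children.append(child)
        population = survivors + children
        history.append(max(fitness(g) for g in population))
    return history

history = evolve()
print("best fitness, first vs last generation:", history[0], history[-1])
```

Because the survivors are carried over unchanged, the best score can never get worse from one generation to the next. Everything hard about the real problem hides inside `fitness`: scoring "intelligence" automatically is exactly the difficulty the paragraph above describes.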

The downside of copying evolution is that evolution takes billions of years to get anything done, and we want to do this in a few decades.

But we have a lot of advantages over evolution. First, evolution has no foresight and works at random; it produces useless mutations, for example, whereas we can steer the process toward the goals we set. Second, evolution does not aim at anything, including intelligence; sometimes an environment even selects against higher intelligence (since it consumes a lot of energy). We, on the other hand, can aim specifically at increasing intelligence. Third, to select for intelligence, evolution has to innovate in a number of other ways along the way, such as reworking how cells produce energy, whereas we can drop those extra burdens and simply use electricity. Without a doubt we will be much faster than evolution, but it is still not clear whether we can improve on it enough for this strategy to work.

3. Let the computers do it themselves

This is the last resort, for when scientists get desperate and try to program a program that develops itself. Yet it may turn out to be the most promising method of all. The idea is to build a computer with two main skills: doing AI research and coding changes into itself, so that it can not only learn more but also improve its own architecture. We would teach computers to be their own computer engineers, so that they develop themselves. And their main job would be to figure out how to make themselves smarter. More on this later.

All this can happen very soon.

Rapid hardware development and software experimentation are going on in parallel, and general AI may appear quickly and unexpectedly for two main reasons:

1. Exponential growth is intense, and what looks like a snail's pace can quickly turn into leaps and bounds. This GIF illustrates the concept well:

2. When it comes to software, progress may seem slow, but then a single breakthrough can instantly change the pace of advance (a good example: while people held a geocentric view of the world, calculating how the universe worked was difficult, but the discovery of heliocentrism made everything much simpler). Or, when it comes to a computer that improves itself, progress may seem extremely slow when in fact a single tweak to the system separates it from being a thousand times more efficient than a person or than its previous version.
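The first point, that exponential growth looks like a snail's pace for most of the journey, can be checked with a little arithmetic. The sketch below assumes a quantity that simply doubles at every step; the function names and the target of one million are illustrative choices, not figures from the article.

```python
def steps_to_reach(target, start=1.0, factor=2.0):
    """Count how many multiplications by `factor` it takes for `start` to reach `target`."""
    steps = 0
    value = start
    while value < target:
        value *= factor
        steps += 1
    return steps


def progress_fraction(step, total_steps, factor=2.0):
    """Share of the final value that has been reached after `step` of `total_steps` steps."""
    return factor ** step / factor ** total_steps


total = steps_to_reach(1_000_000)               # doublings needed to pass one million
halfway = progress_fraction(total // 2, total)  # share of the goal reached at the midpoint
```

Under these assumptions it takes 20 doublings to pass one million, yet after 10 of them (half the elapsed time) only 1/1024 of the final value has been reached. Almost all of the visible progress arrives in the last few steps.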

The road from OII to ISI

At a certain point we will definitely get OII: artificial general intelligence, computers with a general human level of intelligence. Computers and people will live side by side. Or they will not.

The fact is that an OII with the same level of intelligence and computing power as a person will still have significant advantages over people. For example:

Hardware

Speed. The brain's neurons fire at a frequency of about 200 Hz, while modern microprocessors (which are far slower than what we will have by the time OII is created) run at 2 GHz, or 10 million times faster than our neurons. And the brain's internal communications, which travel at about 120 m/s, are vastly inferior to a computer's ability to communicate optically at the speed of light.

Size and storage. The size of the brain is limited by the size of our skulls, and it cannot grow much larger, otherwise signals travelling at 120 m/s would take too long to get from one structure to another. Computers can expand to any physical size, use more hardware, and add more RAM and long-term storage, all of which is beyond our capabilities.

Reliability and durability. It is not only a computer's memory that is more precise than a human's. Computer transistors are more accurate than biological neurons and less prone to deterioration (and they can be replaced or repaired when they fail). Human brains also tire easily, while computers can work non-stop, 24 hours a day, 7 days a week.
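The speed figures quoted above are easy to verify. The sketch below uses the article's numbers for neurons and processors; the 0.15 m signal path (roughly the width of a human head) is an assumption made for illustration, and the speed of light in vacuum is used as an upper bound, since signals in real optical fibre travel somewhat slower.

```python
NEURON_RATE_HZ = 200                 # firing rate quoted in the text
CPU_CLOCK_HZ = 2e9                   # 2 GHz, as quoted in the text
AXON_SPEED_M_PER_S = 120             # signal speed in the brain, as quoted in the text
LIGHT_SPEED_M_PER_S = 299_792_458    # speed of light in vacuum (upper bound for optics)
SIGNAL_PATH_M = 0.15                 # assumed distance, about the width of a human head


def speed_ratio(fast, slow):
    """How many times faster the first rate is than the second."""
    return fast / slow


def travel_time_seconds(distance_m, speed_m_per_s):
    """Time for a signal to cover a distance at a constant speed."""
    return distance_m / speed_m_per_s


clock_ratio = speed_ratio(CPU_CLOCK_HZ, NEURON_RATE_HZ)
brain_delay = travel_time_seconds(SIGNAL_PATH_M, AXON_SPEED_M_PER_S)
optical_delay = travel_time_seconds(SIGNAL_PATH_M, LIGHT_SPEED_M_PER_S)
```

With these inputs the clock ratio comes out at 10 million, matching the text, and a signal needs 1.25 milliseconds to cross the assumed distance at 120 m/s, against well under a nanosecond at the speed of light.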

Software

Editability, upgradability, and a wider range of possibilities. Unlike the human brain, a computer program can easily be repaired, updated, and experimented on. Upgrades can also extend to areas in which the human brain is weak. The human “software” responsible for vision is superbly designed, but from an engineering point of view its abilities are still very limited: we see only the visible spectrum of light.

Collective ability. Humans outdo all other species at building a vast collective intelligence. Beginning with the development of language and the formation of large communities, advancing through the invention of writing and printing, and now intensified by tools such as the Internet, humanity's collective intelligence is an important reason why we can call ourselves the crown of evolution. But computers will still be better at it. A global network of AIs running one program, constantly synchronized and self-improving, could instantly add new information to a shared database, no matter where it was acquired. Such a group could also work toward one goal as a single unit, because computers do not suffer from dissenting opinions, private motivations, and self-interest the way people do.

An AI, which will most likely become OII through programmed self-improvement, will not see “human-level intelligence” as an important milestone; that milestone matters only to us. It will have no reason to stop at this dubious level. And given the advantages that even a human-level OII will have, it is quite clear that human-level intelligence will be only a brief flash for it in the race for intellectual superiority.

This development may surprise us very, very much. The reason is that, from our point of view, a) the only yardstick we have for judging intelligence is the intelligence of animals, which is by default lower than ours; and b) the smartest people seem to us VASTLY smarter than the most stupid. Something like this:

That is, while the AI is simply trying to reach our level of development, we watch it grow smarter as it approaches the level of an animal. When it reaches the lowest human level (Nick Bostrom uses the term “village idiot”), we will be delighted: “Wow, it's already like a moron. Cool!” The only thing is that across the whole spectrum of human intelligence, from the village idiot to Einstein, the range is small, so soon after the AI reaches the level of the fool and becomes OII, it will suddenly be smarter than Einstein.

And what will happen next?

Explosion of intelligence

I hope you have found this interesting and fun, because from this point on the topic becomes abnormal and creepy. We should pause and remind ourselves that everything stated above and below is real science and real forecasts of the future from the most eminent thinkers and scientists. Just keep that in mind.

So, as noted above, all of our current models for reaching OII include a scenario in which the AI improves itself. And once it becomes an OII, even systems that grew up by other methods become smart enough to self-improve, if they wish. An interesting concept arises: recursive self-improvement. It works like this.

An AI system at a certain level, say that of a village idiot, is programmed to improve its own intelligence. Once it has developed, say to the level of Einstein, the system continues to evolve with Einstein's intelligence at its disposal, so each step takes less time and each leap is bigger. These leaps let the system surpass any human by an ever wider margin. As it progresses rapidly, the OII soars to heavenly heights of intellect and becomes a superintelligent system, ISI. This process is called an intelligence explosion, and it is the clearest example of the law of accelerating returns.
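The feedback loop behind recursive self-improvement can be illustrated with a deliberately crude toy model. It is a sketch under strong assumptions, not a forecast: capability is a single number, each improvement step raises it by a fixed fraction (`gain`), and the time a step takes is inversely proportional to the capability of the system doing the work. The function name and all parameters are hypothetical.

```python
def self_improvement_timeline(start_level=1.0, gain=0.5, base_effort=1.0, steps=10):
    """Return a list of (elapsed_time, capability) pairs, one per improvement step.

    Toy assumptions: every step multiplies capability by (1 + gain), and a step
    costs base_effort / capability units of time, so smarter systems improve faster.
    """
    level = start_level
    elapsed = 0.0
    timeline = []
    for _ in range(steps):
        elapsed += base_effort / level  # a more capable system finishes the step sooner
        level *= 1 + gain               # the finished step makes the system more capable
        timeline.append((elapsed, level))
    return timeline


timeline = self_improvement_timeline()
for elapsed, level in timeline:
    print(f"t = {elapsed:.3f}  capability = {level:.2f}")
```

In this model each step is shorter than the one before while the capability keeps multiplying, which is the shape of the argument in the text. The sharp pile-up of steps in a short stretch of time is a consequence of the assumed rules; real systems could hit diminishing returns that the model ignores.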

Scientists argue about how quickly AI will reach the level of OII. The majority believe we will get OII by 2040, in just 25 years, which is very, very soon by the standards of technological development. Following the logical chain, it is easy to suppose that the transition from OII to ISI will also happen extremely quickly. Something like this:

We do not even have suitable terms to describe a superintelligence of this magnitude. In our world, “smart” means a person with an IQ of 130 and “stupid” means 85, but we have no examples of people with an IQ of 12,952. Our yardsticks are not designed for this.

The history of mankind tells us loud and clear: with intelligence come power and strength. This means that when we create an artificial superintelligence, it will be the most powerful being in the history of life on Earth, and all living things, including humans, will be entirely in its power. And this could happen within twenty years.

If our meager brains were able to come up with Wi-Fi, then something a hundred, a thousand, or a billion times smarter than us will easily be able to calculate the position of every atom in the universe at any given moment. Everything that could be called magic, every power attributed to an omnipotent deity, will be at the disposal of the ISI. Creating technology to reverse aging, curing any disease, ending hunger and even death, controlling the weather: all of this suddenly becomes possible. So does the immediate end of all life on Earth. The cleverest people on our planet agree that as soon as an artificial superintelligence appears in the world, it will mark the arrival of God on Earth. And an important question remains.

Will he be a good god?

Based on waitbutwhy.com, compiled by Tim Urban. The article uses materials from Nick Bostrom, James Barrat, Ray Kurzweil, Nils Nilsson, Steven Pinker, Vernor Vinge, Moshe Vardi, Russ Roberts, Stuart Armstrong and Kaj Sotala, Susan Schneider, Stuart Russell and Peter Norvig, Theodore Modis, Gary Marcus, Carl Shulman, John Searle, Jaron Lanier, Bill Joy, Kevin Kelly, Paul Allen, Stephen Hawking, Kurt Andersen, Mitch Kapor, Ben Goertzel, Arthur C. Clarke, Hubert Dreyfus, Ted Greenwald, Jeremy Howard.
